Boston House Price Analysis

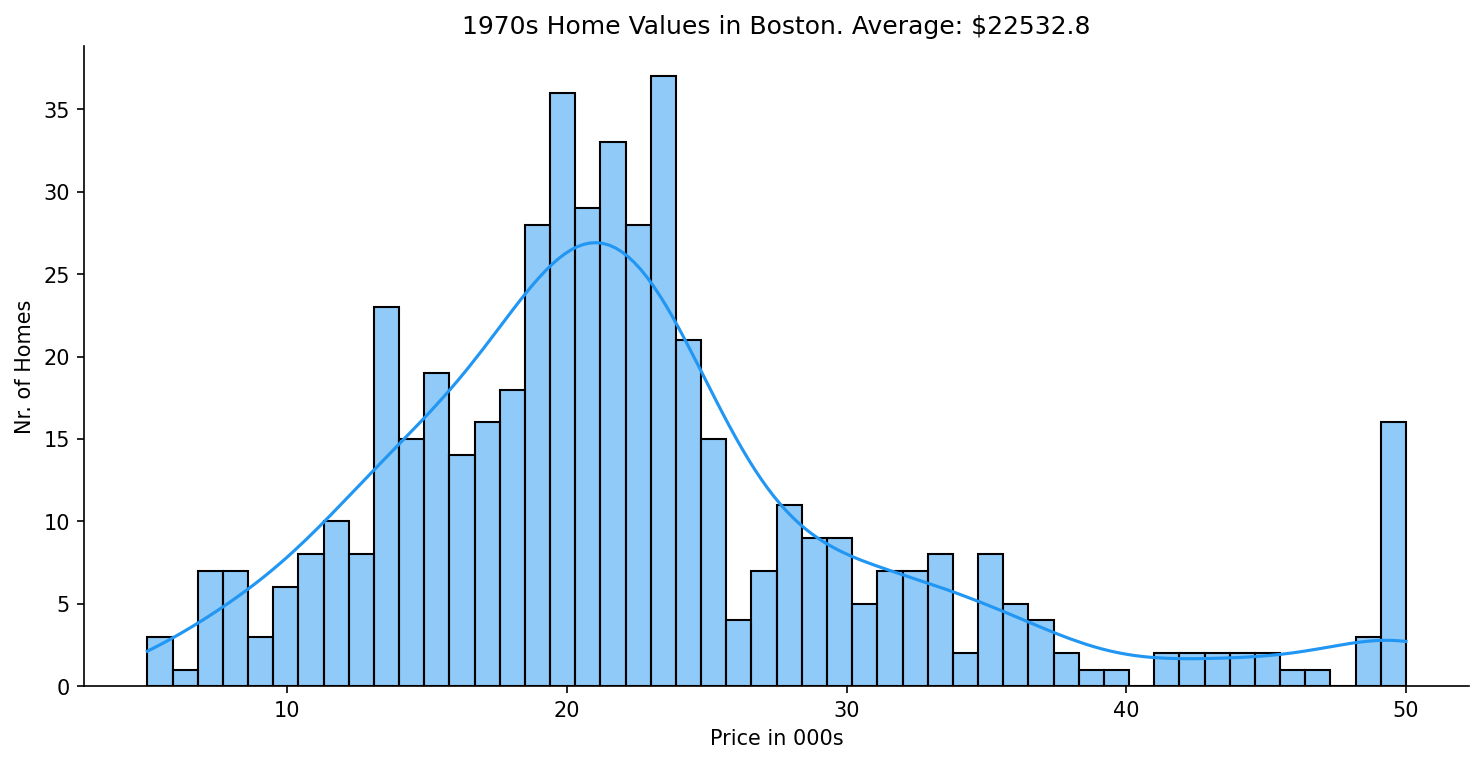

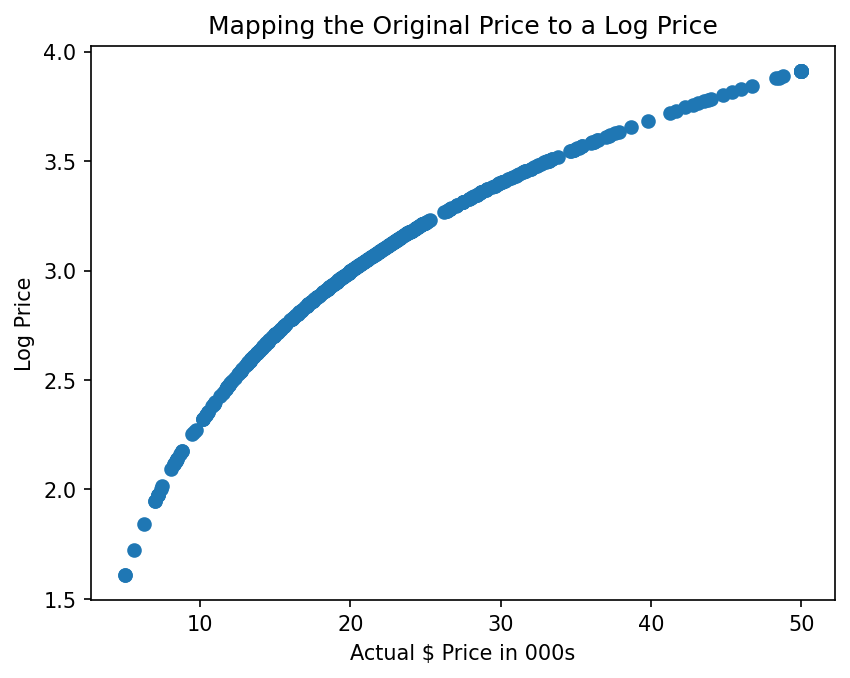

This project builds a multivariable linear regression model on the classic Boston Housing Dataset (506 census tracts, 13 features) to estimate median residential property values. It covers the full data science workflow: exploratory analysis and visualisation, train/test splitting, model fitting, residual diagnostics, and a working valuation function that returns a dollar estimate for any given property configuration. The log transformation step takes the model from r² 0.67 to 0.74 on held-out data, a concrete, measurable improvement that came from reading the residuals rather than accepting the first fit.

Overview

Problem

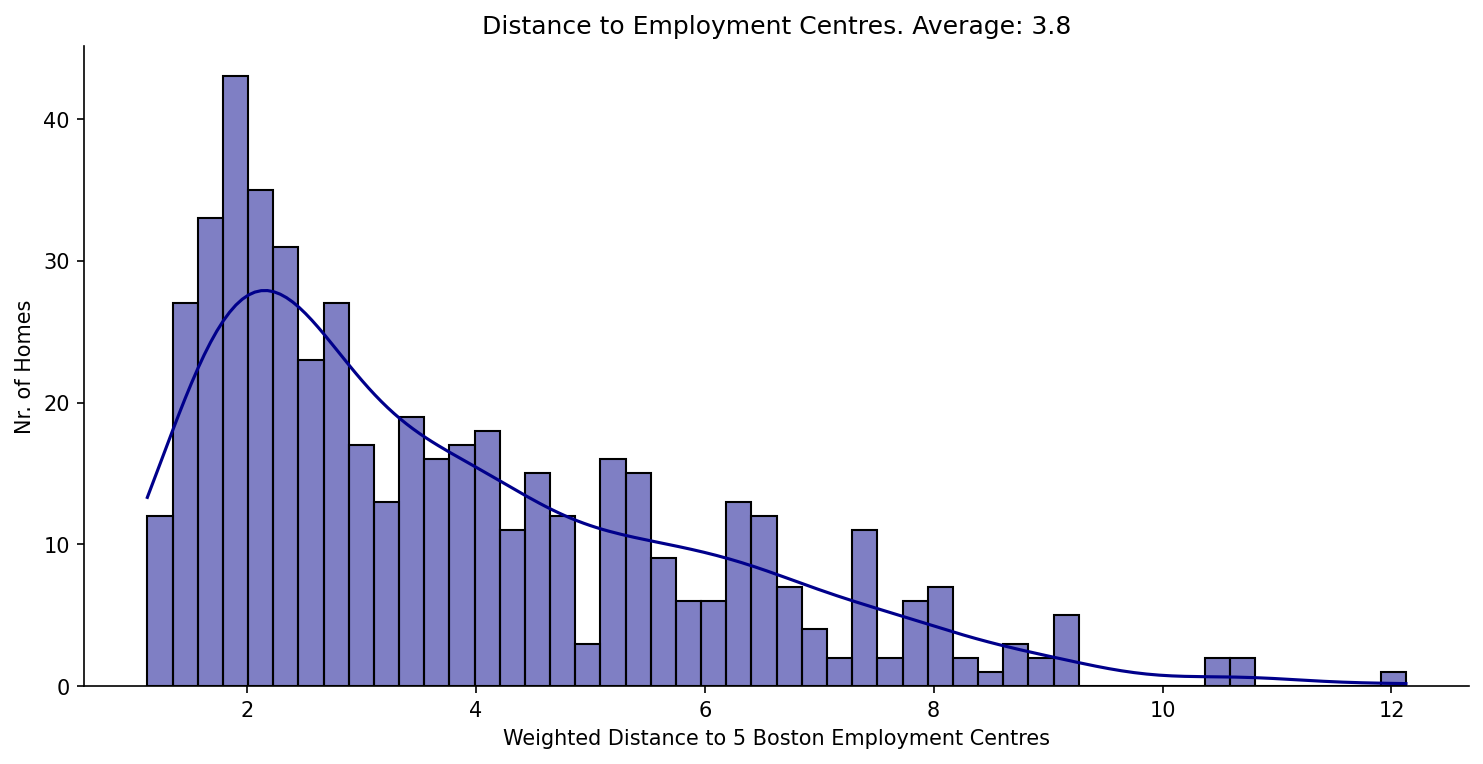

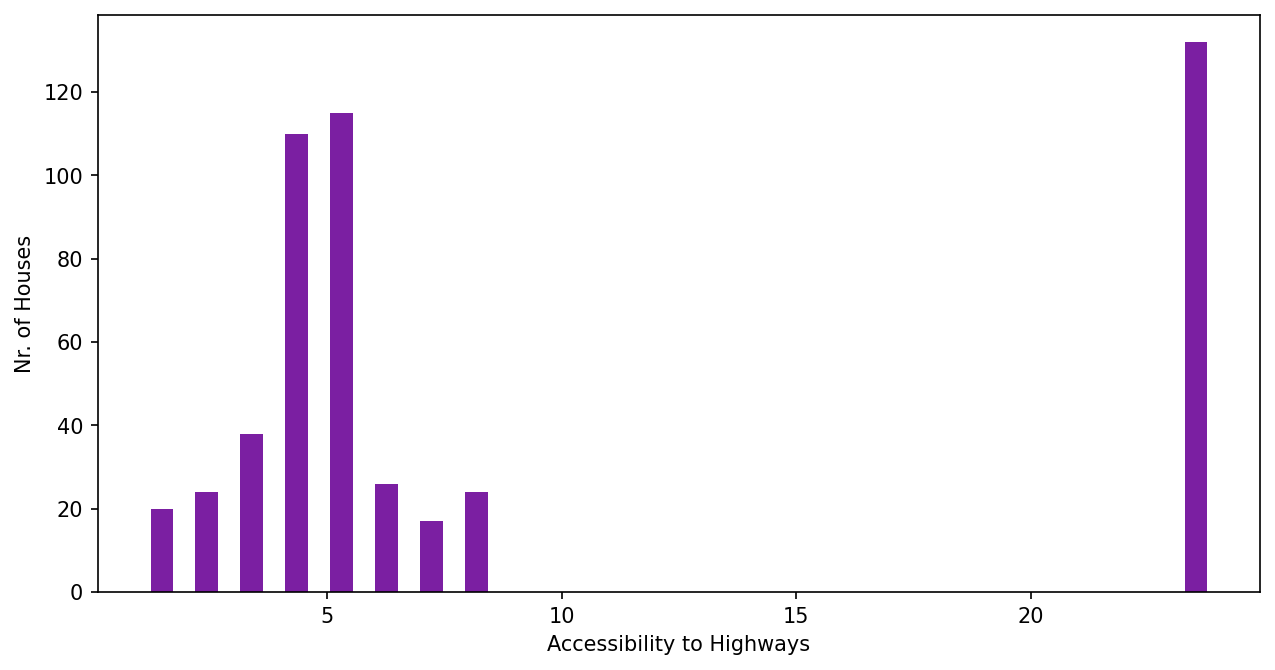

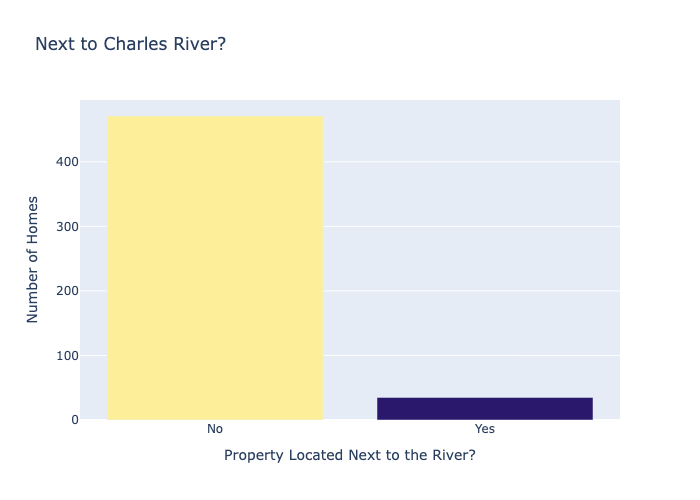

Real estate valuation is rarely driven by a single factor: price is the result of many neighbourhood characteristics interacting at once. A simple "price per square metre" heuristic ignores crime rates, school quality, pollution, and proximity to employment, all of which the data shows matter. Without a model that accounts for all 13 features simultaneously, estimates are likely to be badly miscalibrated for properties at the extremes, such as highly desirable river-adjacent homes or high-crime districts. The challenge was to build something that could weigh all those variables at once and still produce an interpretable, trustworthy output.

Solution

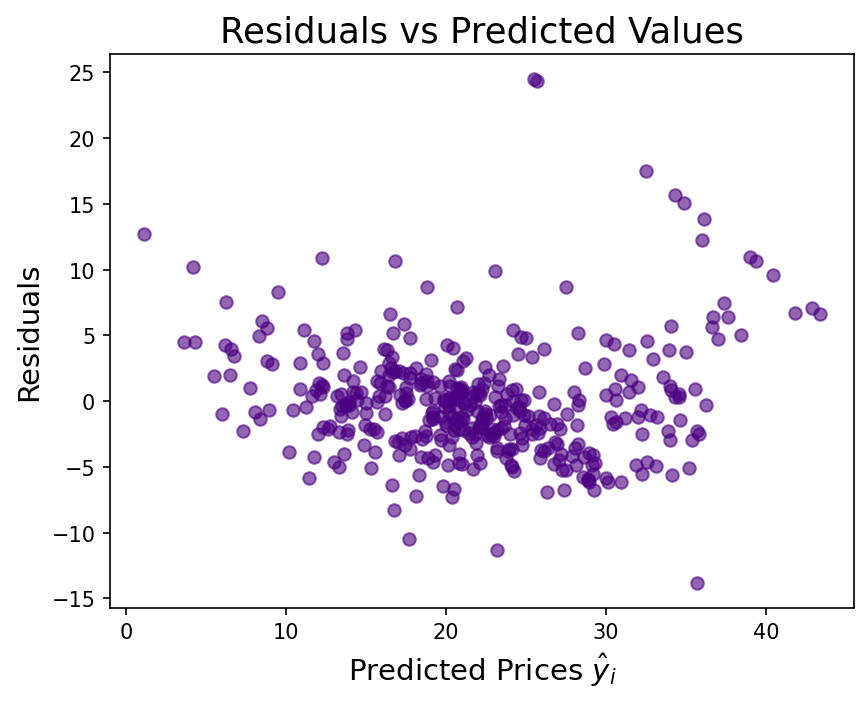

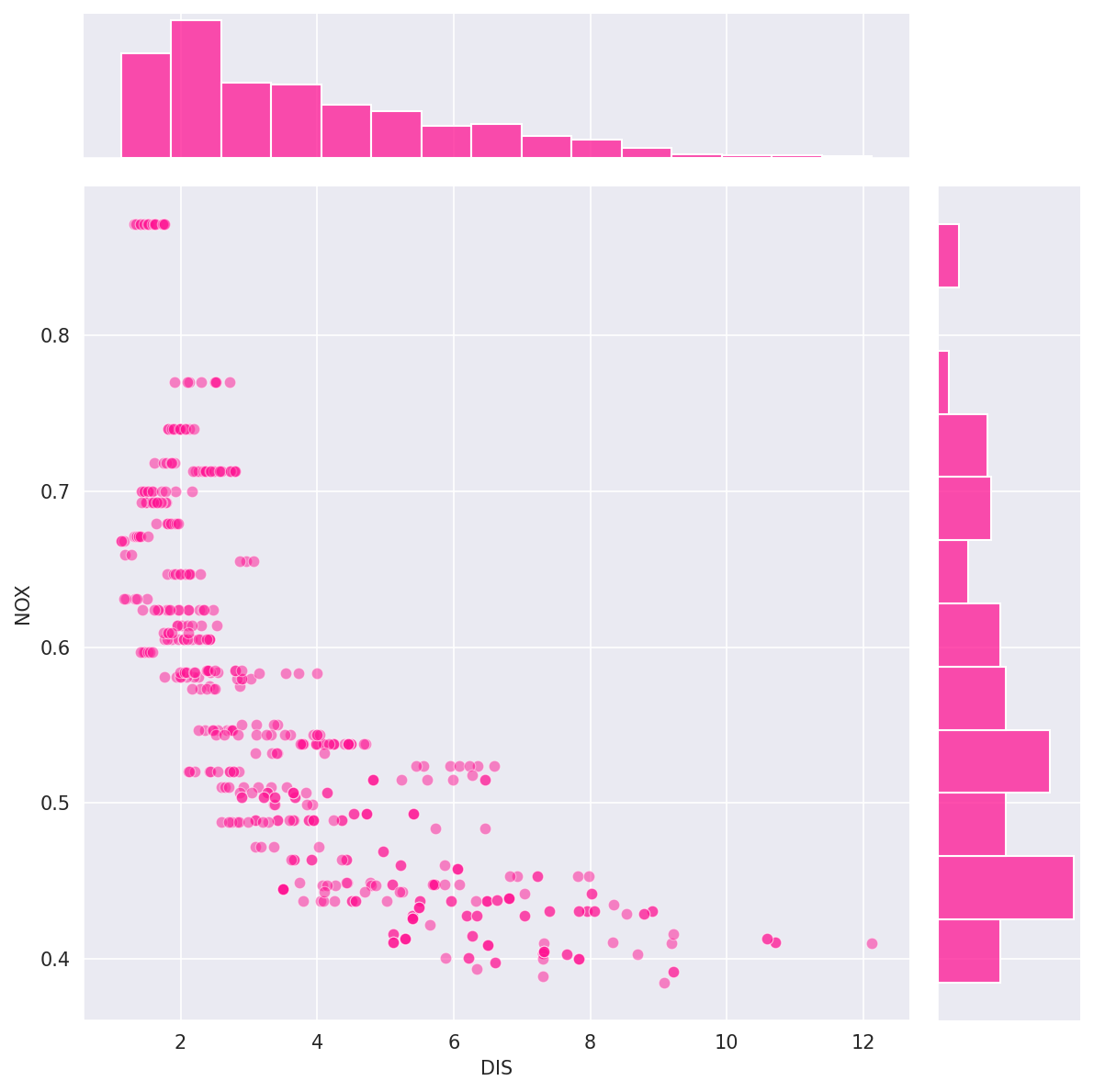

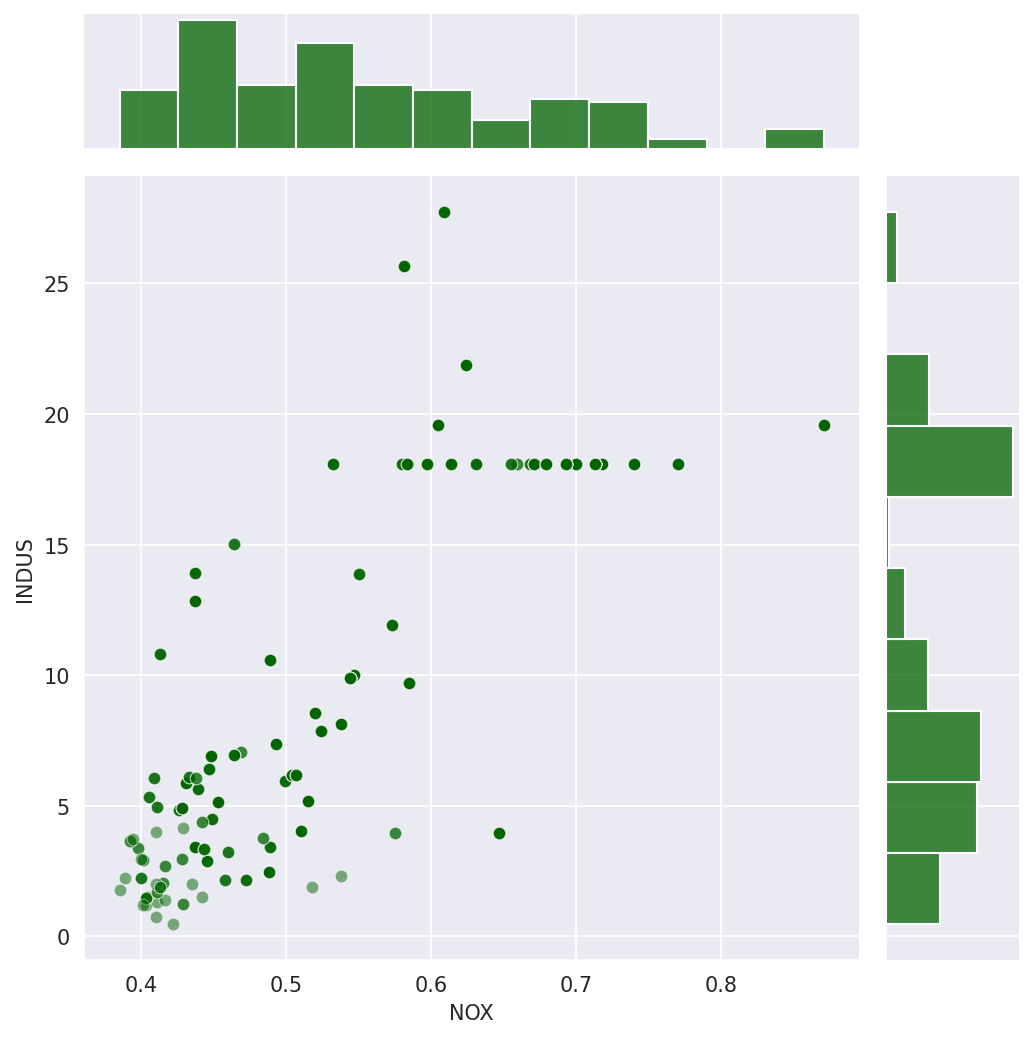

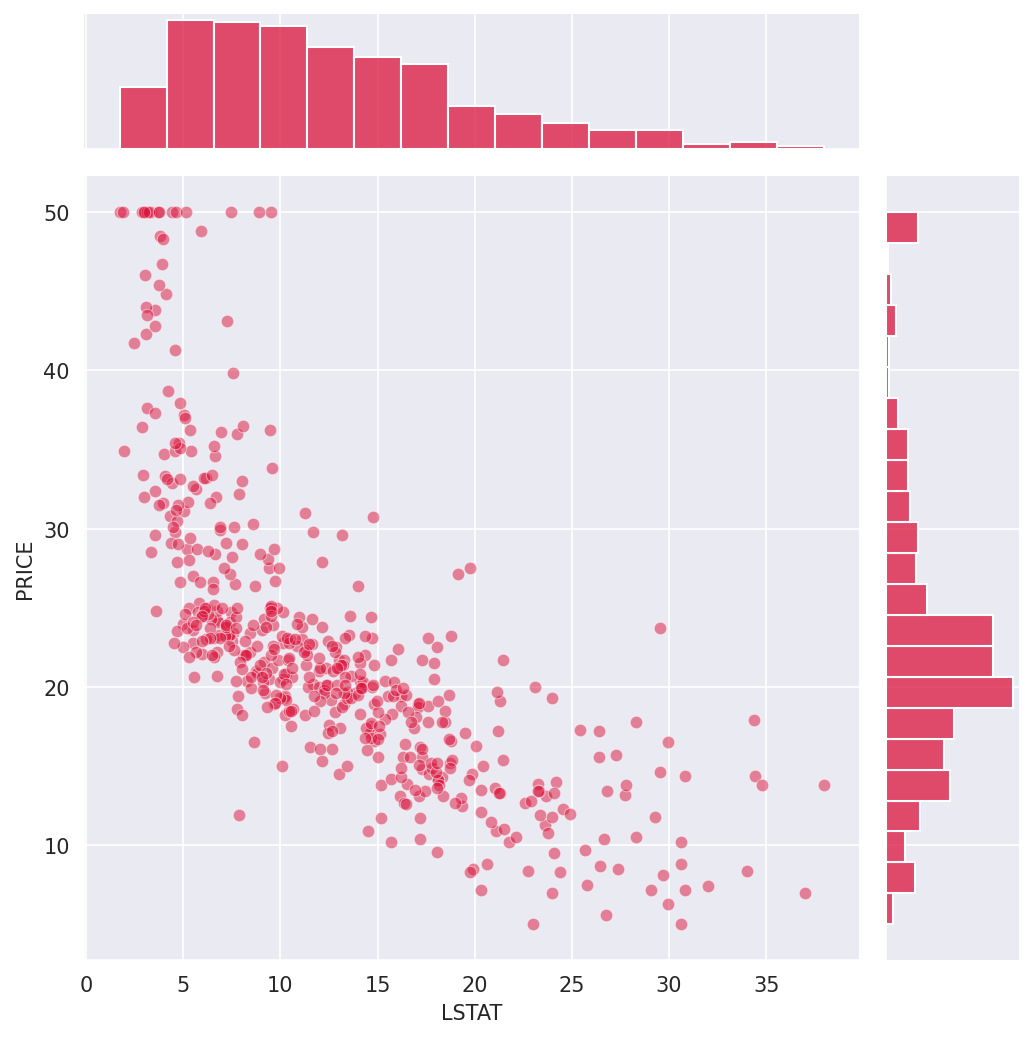

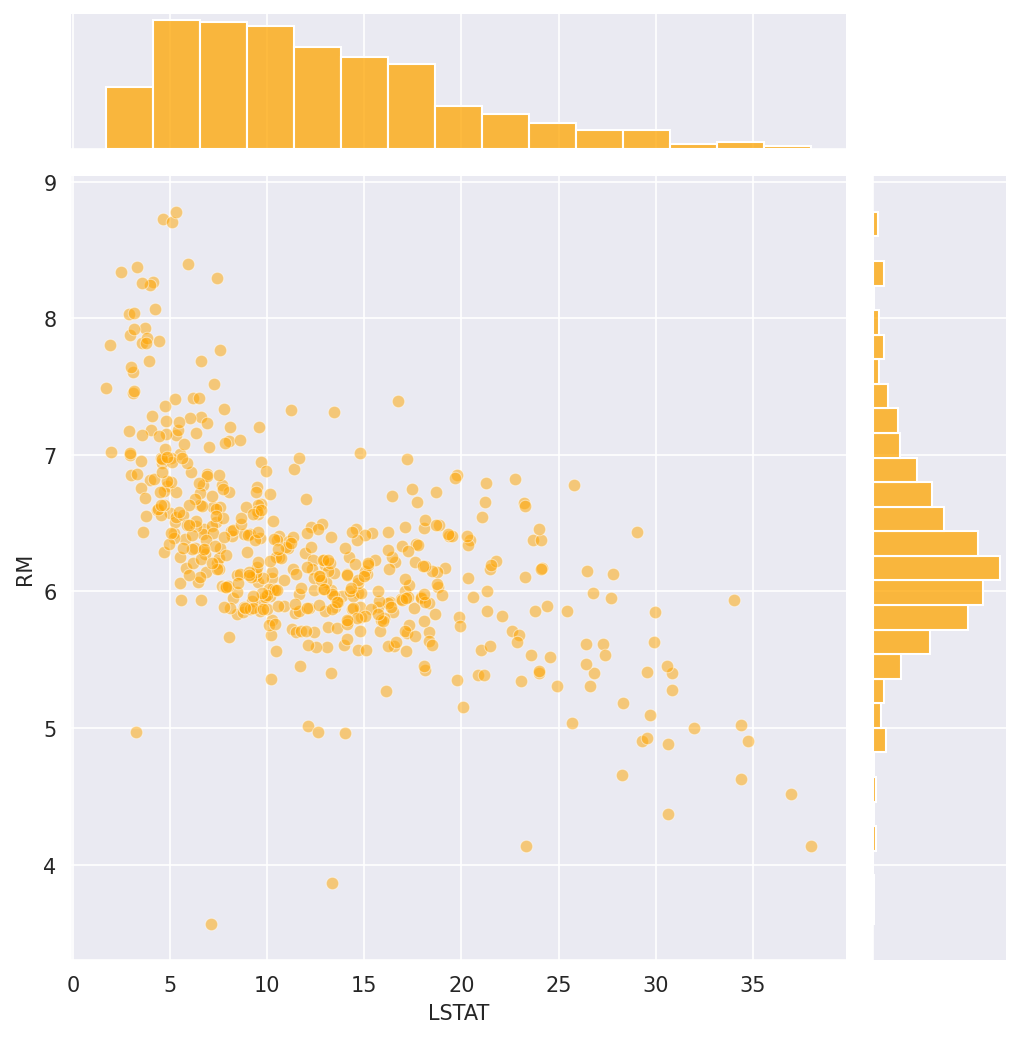

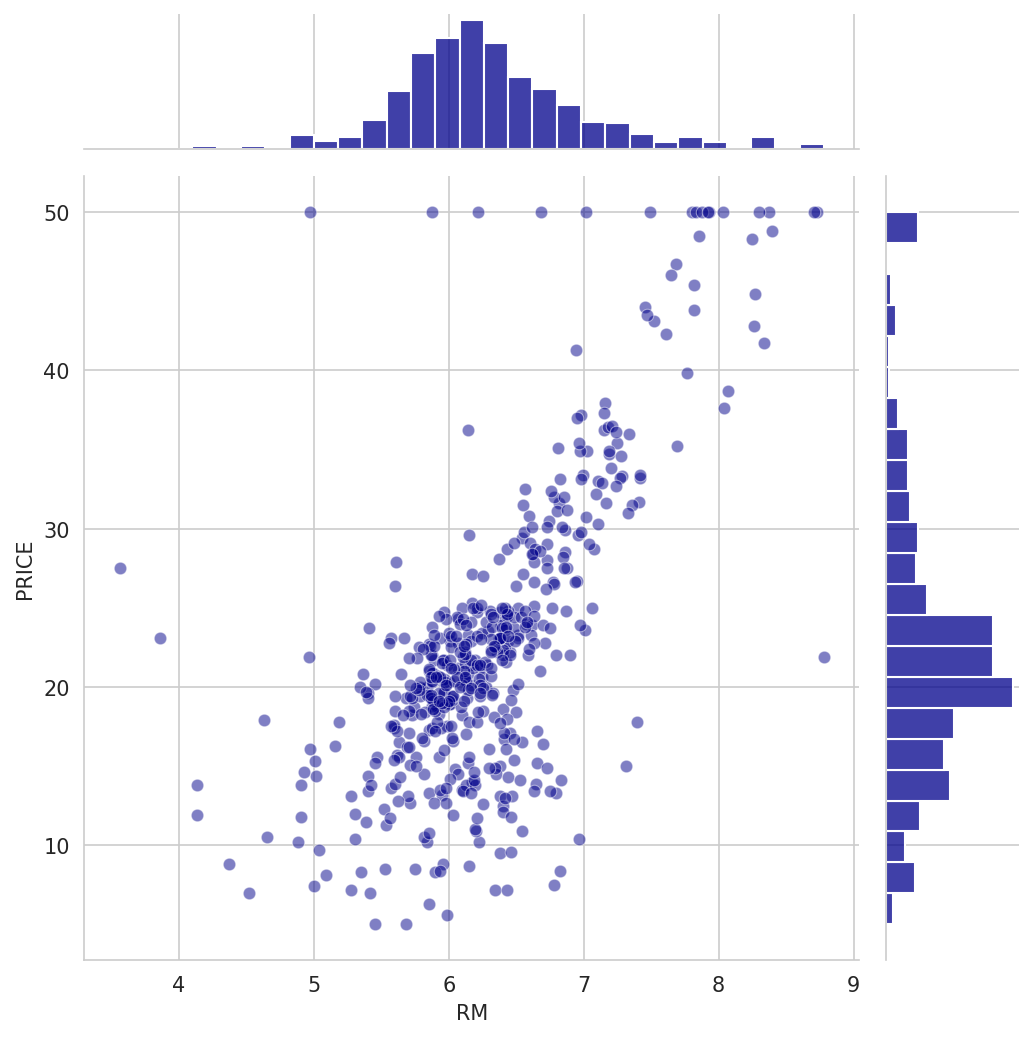

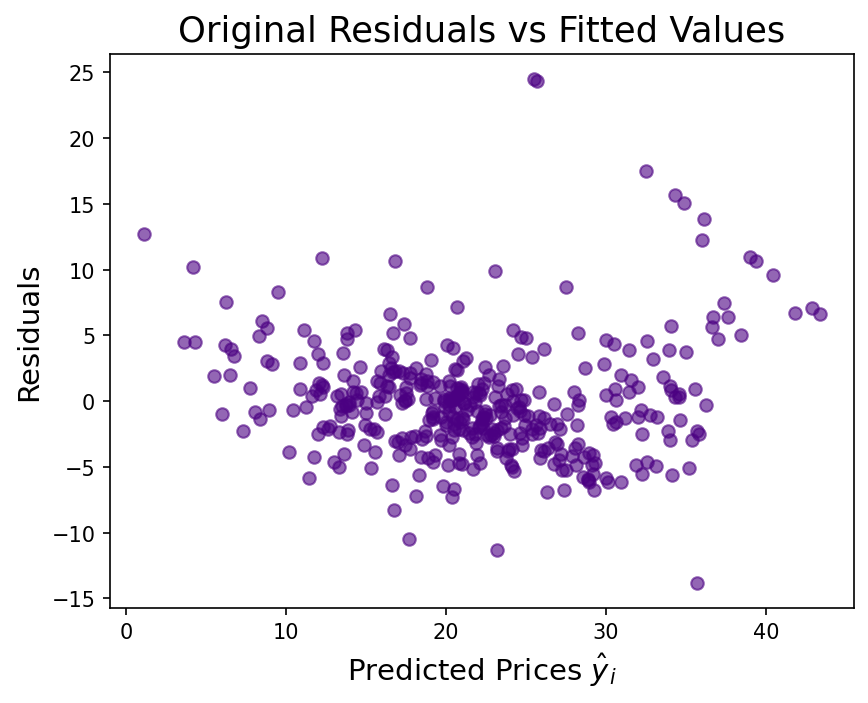

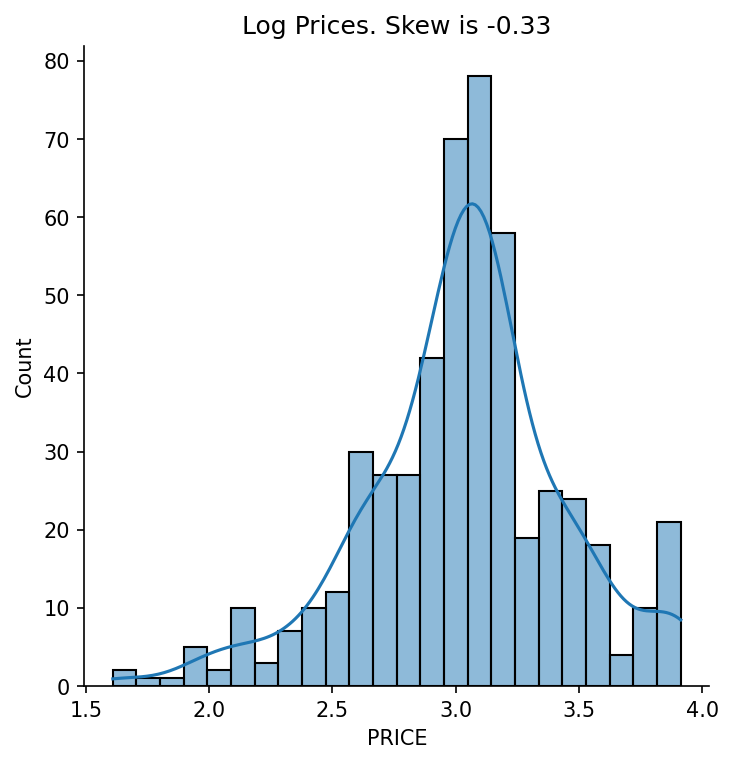

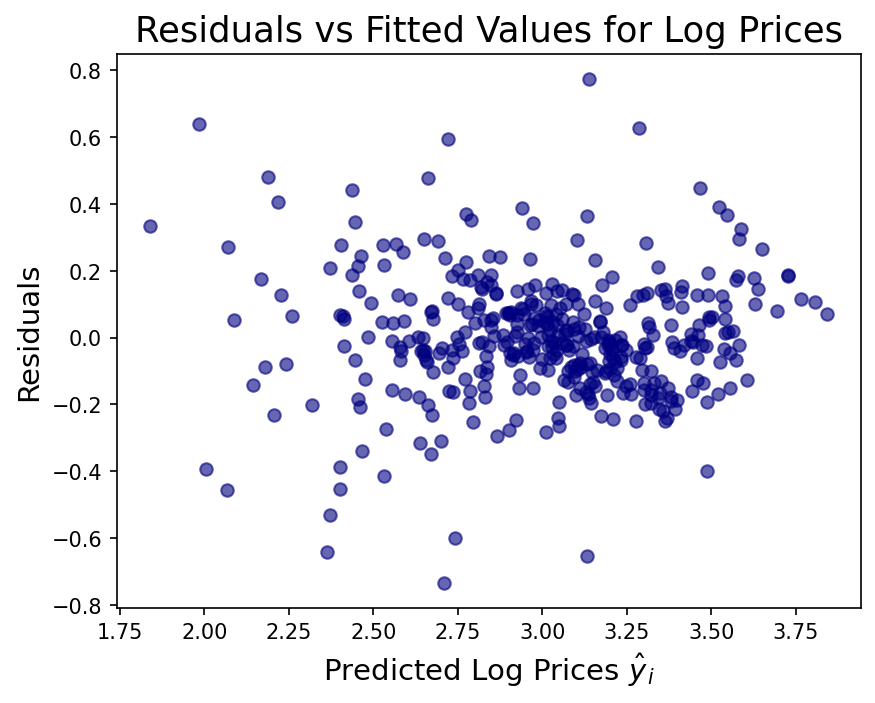

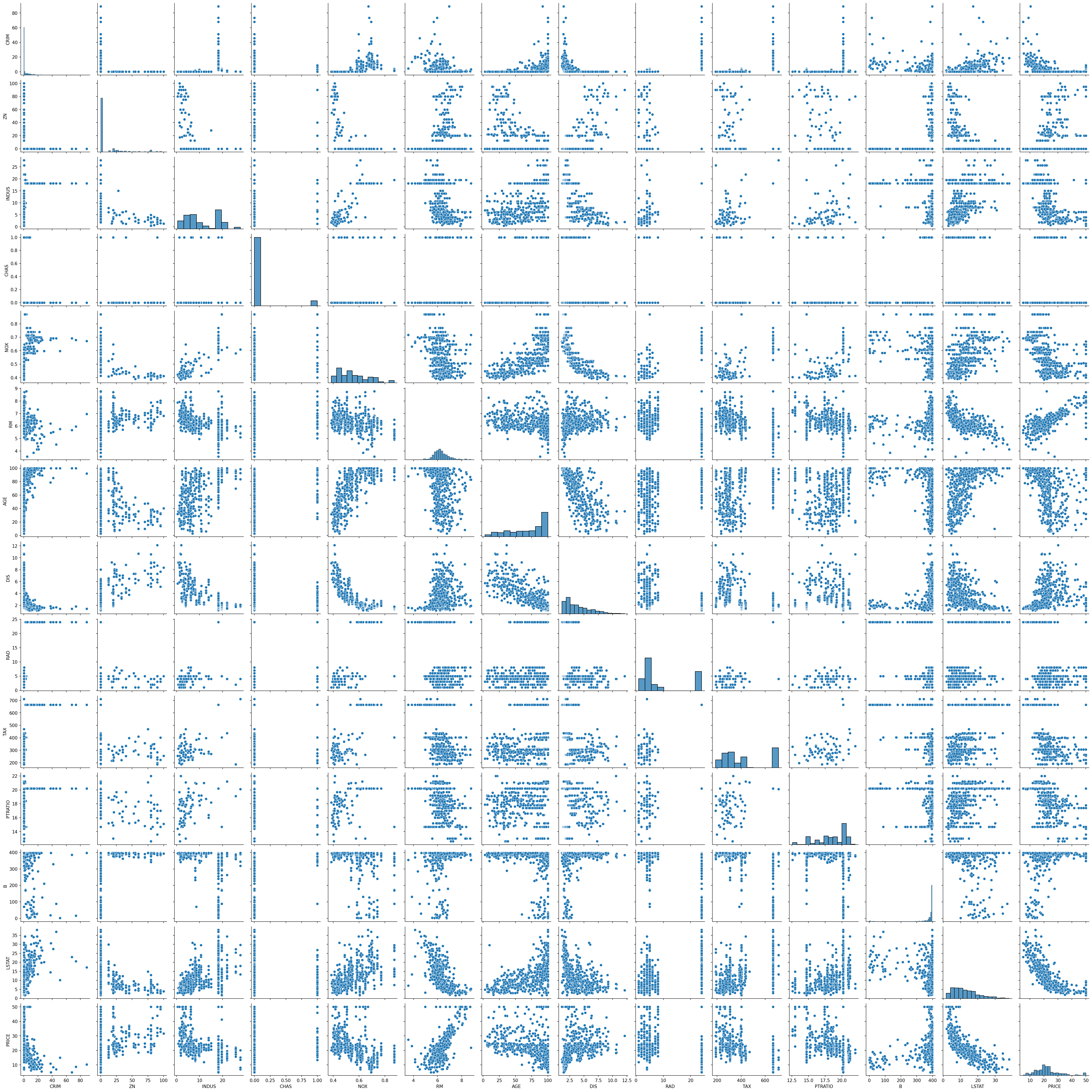

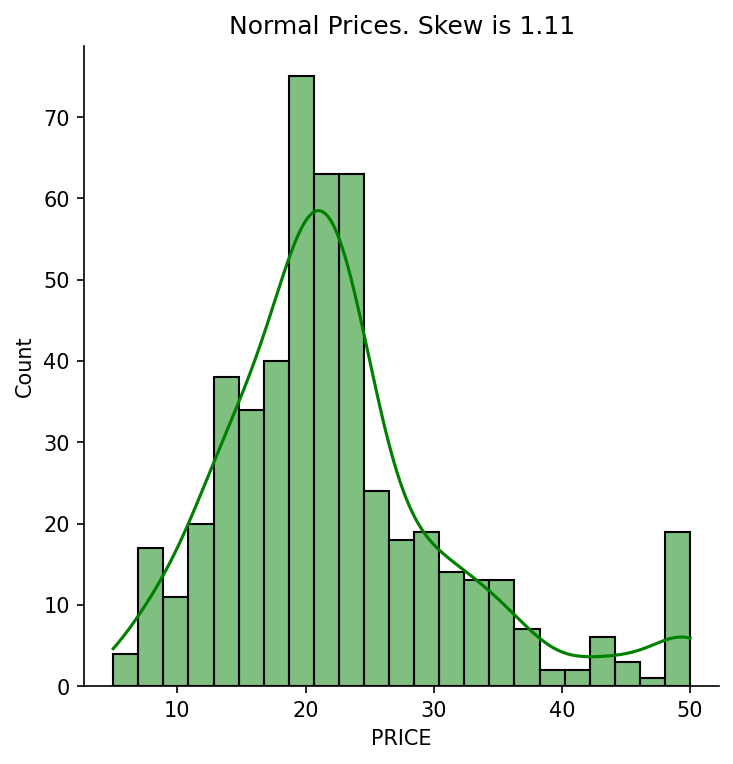

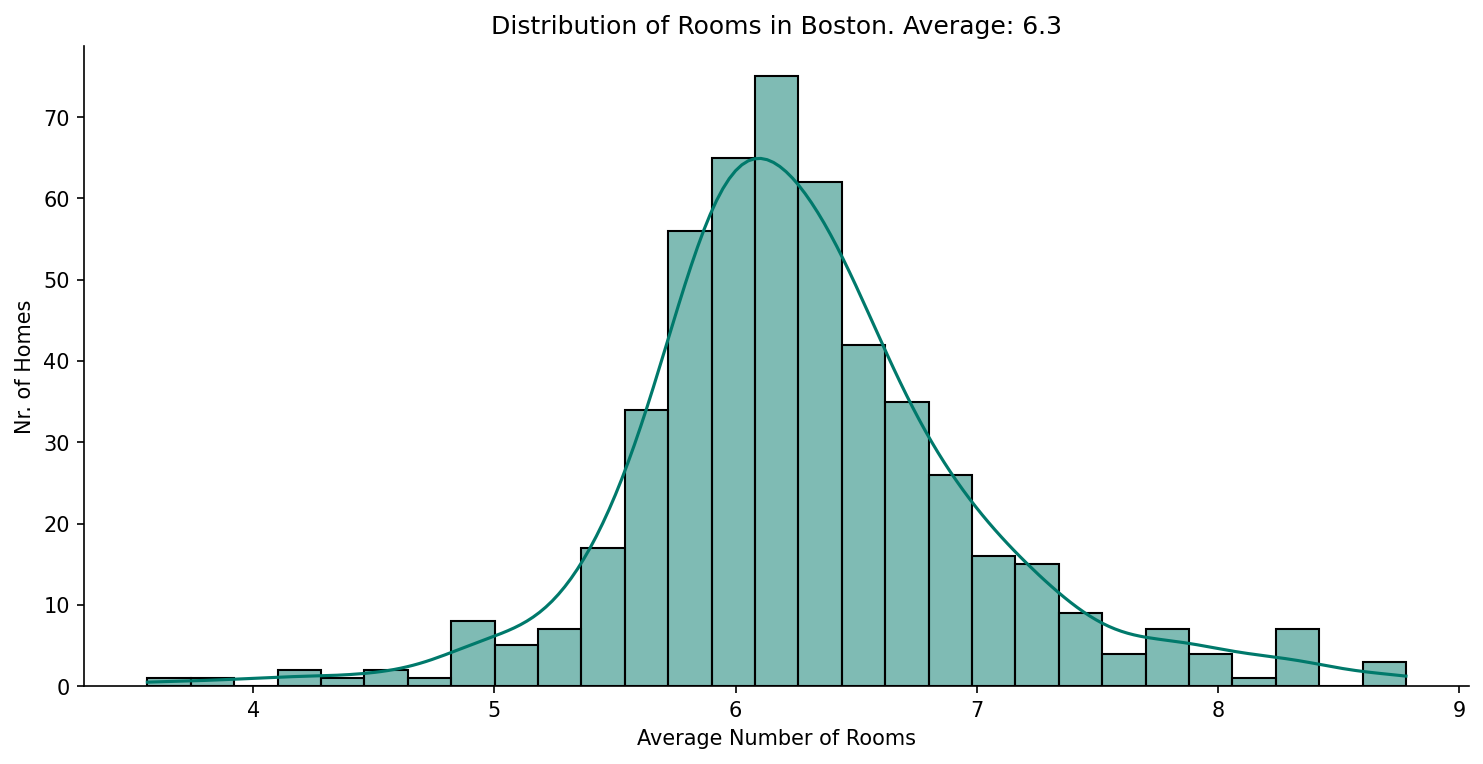

I fit a multivariable OLS regression using scikit-learn's LinearRegression on an 80/20 train/test split (random_state=10 for reproducibility). Before modelling I explored the data visually with seaborn jointplots (DIS vs NOX, LSTAT vs PRICE, RM vs PRICE) and a full pairplot to understand which relationships were linear and which were curved. After the first model showed positive skew and a fan-shaped residual pattern, I applied a log transformation to the target variable — fitting the model on log(PRICE) and inverting with np.exp() at prediction time. The final valuation function takes a property's 13 feature values, feeds them through the log model, and returns a dollar estimate, making the model directly usable as a decision tool.
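
The log-target fit and the valuation function described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the data is synthetic (two features instead of 13, with made-up generating coefficients), `estimate_value` and `FEATURES` are hypothetical names, and the factor of 1000 assumes PRICE is recorded in thousands of dollars, as it is in the Boston dataset.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the Boston data (hypothetical values, two features only).
FEATURES = ["RM", "LSTAT"]  # column order used at training time
rng = np.random.default_rng(0)
n = 506
rm = rng.uniform(4, 9, n)
lstat = rng.uniform(2, 30, n)
X = np.column_stack([rm, lstat])
# PRICE in thousands of dollars, generated with multiplicative noise.
price = np.exp(2.0 + 0.2 * rm - 0.02 * lstat + rng.normal(0, 0.2, n))

# 80/20 split with the same random_state the write-up mentions.
X_train, X_test, y_train, y_test = train_test_split(
    X, price, test_size=0.2, random_state=10
)

# Fit on log(PRICE) rather than PRICE.
log_model = LinearRegression().fit(X_train, np.log(y_train))
r2_log_test = log_model.score(X_test, np.log(y_test))  # r² on the log scale


def estimate_value(model, **features):
    """Return a dollar estimate for one property given named feature values."""
    missing = [name for name in FEATURES if name not in features]
    if missing:
        raise ValueError(f"missing features: {missing}")
    # Build the row in training column order, whatever order the caller used.
    row = np.array([[features[name] for name in FEATURES]], dtype=float)
    log_price = model.predict(row)[0]
    # Undo the log, then convert thousands of dollars to dollars.
    return float(np.exp(log_price) * 1000)


estimate = estimate_value(log_model, LSTAT=10.0, RM=6.5)
print(f"test r² (log scale): {r2_log_test:.2f}, estimate: ${estimate:,.0f}")
```

Taking the features by name and reordering them inside the function is one way to avoid silently swapped columns; the original function may handle ordering differently.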

Challenges

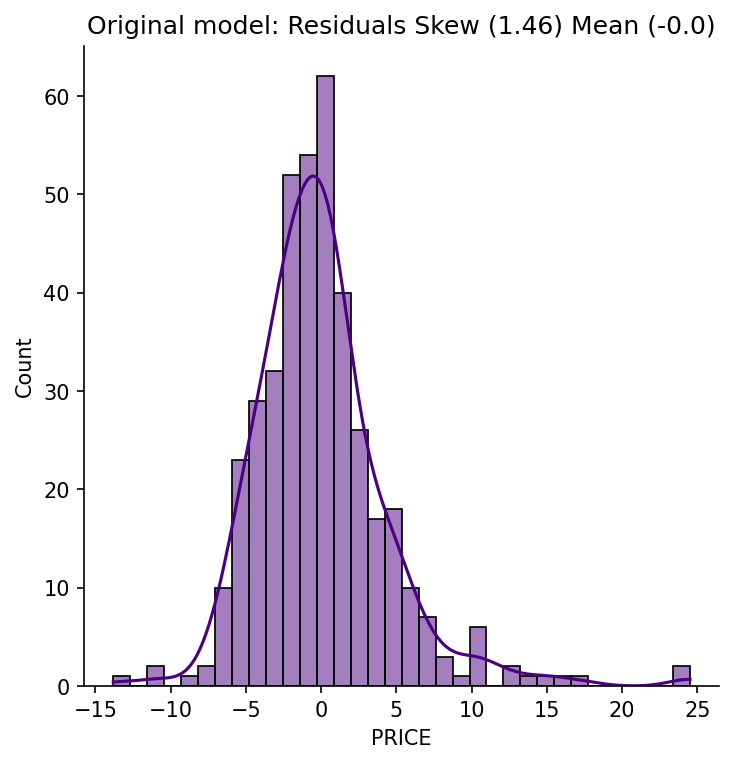

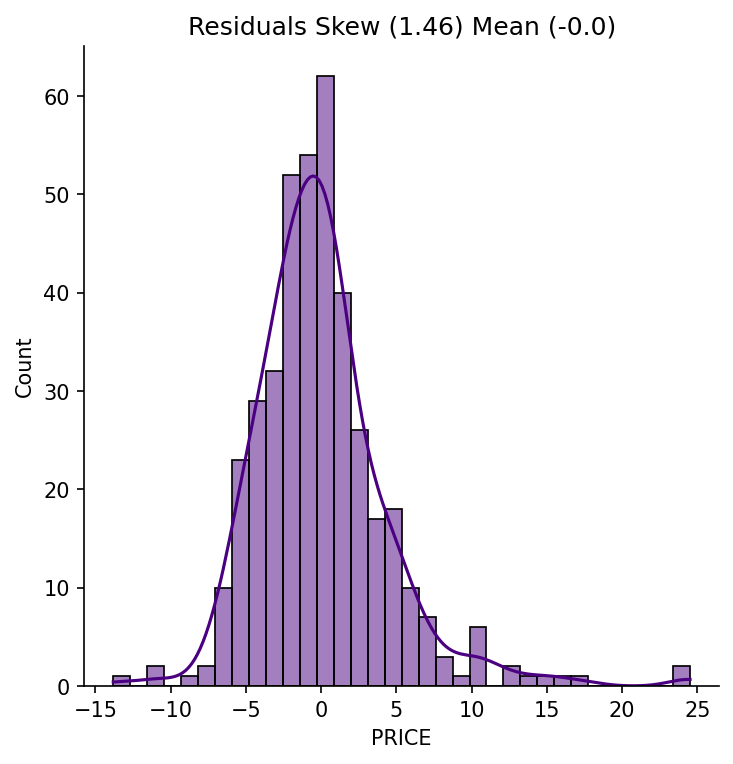

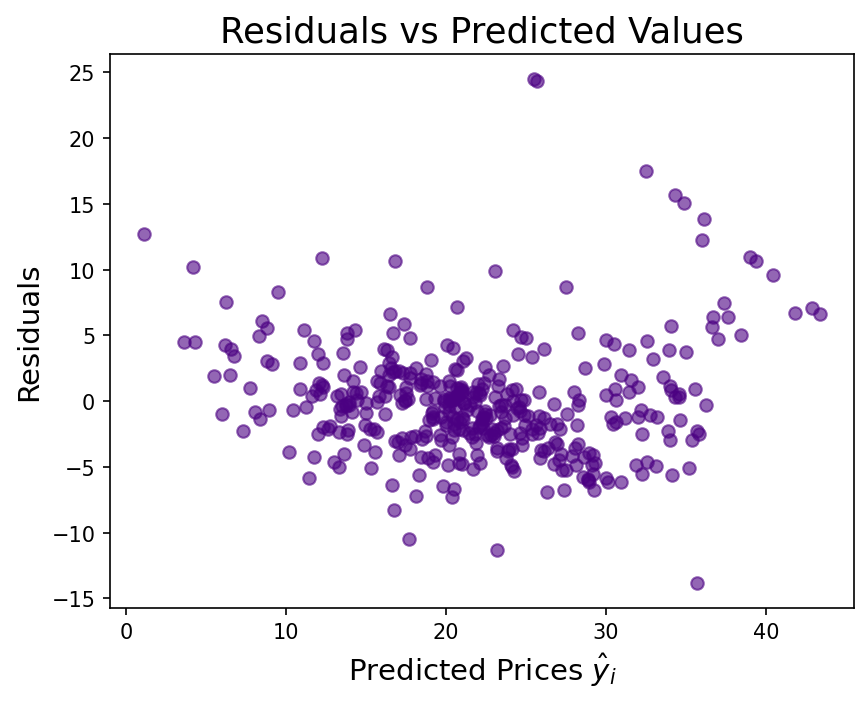

The trickiest part was diagnosing *why* the first model underperformed rather than just accepting its r² of 0.67. The residual vs. predicted plot showed a clear cone shape, with variance increasing as predicted price rose, which is a textbook sign of heteroscedasticity. Recognising that this pointed to a distributional problem with the target (not the features) was the key insight. Applying np.log(PRICE) compressed the high-end values disproportionately, brought the residuals closer to a normal distribution, and lifted test r² to 0.74. Getting the valuation function right also required careful column ordering to match the training DataFrame exactly, since sklearn's predict() matches features by position when given a plain array and so cannot detect swapped columns.
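
The cone shape can also be checked numerically instead of by eye. The sketch below compares residual spread in the top third of fitted values against the bottom third, once for a fit on raw prices and once for a fit on log prices. It is an illustration under stated assumptions: the data is synthetic with multiplicative noise, `spread_ratio` is a hypothetical helper, and `np.linalg.lstsq` stands in for scikit-learn's LinearRegression so the example needs only NumPy.

```python
import numpy as np

# Synthetic data with multiplicative noise (hypothetical, not the Boston data).
rng = np.random.default_rng(0)
n = 600
rm = rng.uniform(4, 9, n)
lstat = rng.uniform(2, 30, n)
price = np.exp(2.0 + 0.2 * rm - 0.02 * lstat + rng.normal(0, 0.25, n))

# Design matrix with an intercept column.
A = np.column_stack([np.ones(n), rm, lstat])


def spread_ratio(target):
    """Residual std in the top third of fitted values over the bottom third.

    A ratio well above 1 means the residuals fan out as predictions grow.
    """
    coef, *_ = np.linalg.lstsq(A, target, rcond=None)
    fitted = A @ coef
    resid = target - fitted
    order = np.argsort(fitted)  # indices from lowest to highest prediction
    third = n // 3
    low = resid[order[:third]]
    high = resid[order[-third:]]
    return high.std() / low.std()


ratio_raw = spread_ratio(price)          # fit on PRICE
ratio_log = spread_ratio(np.log(price))  # fit on log(PRICE)
print(f"spread ratio, raw target: {ratio_raw:.2f}")
print(f"spread ratio, log target: {ratio_log:.2f}")
```

On data like this the raw-target ratio sits well above 1 while the log-target ratio stays near 1, which mirrors the fan shape disappearing after the transformation.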

Results / Metrics

The log-transformed model achieves r² of 0.79 on training data and 0.74 on the held-out test set, a meaningful improvement over the naive first fit. Coefficient signs all make intuitive sense: rooms (RM) is the strongest positive predictor, adding roughly $3,000 per additional room (read as a dollar amount on the raw price scale; on the log scale a coefficient is a proportional change), while poverty (LSTAT), crime (CRIM), and pollution (NOX) all suppress prices. The project taught me that residual analysis isn't a formality: it's the diagnostic that told me what to fix. Next time I'd also explore regularisation (Ridge/Lasso) to see whether shrinking noisy coefficients like B and ZN improves generalisation further.
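
Because the final model is fitted on log(PRICE), its coefficients act multiplicatively on price rather than adding a fixed amount. The short sketch below shows how a log-scale coefficient converts to a percentage and then to a dollar effect. The coefficient value 0.10 and the base prices are hypothetical, chosen only for illustration, and the function names are not from the project.

```python
import math


def pct_change_per_unit(beta):
    """Percentage change in price for a one-unit feature increase in a log(PRICE) model."""
    return (math.exp(beta) - 1.0) * 100.0


def dollar_effect(beta, base_price):
    """Dollar change implied by a one-unit increase, starting from base_price."""
    return base_price * (math.exp(beta) - 1.0)


beta_rm = 0.10  # hypothetical log-scale coefficient, not the fitted value
pct = pct_change_per_unit(beta_rm)
print(f"one extra room: {pct:.1f}% higher price")
for base in (15_000, 30_000):
    print(f"  from ${base:,}: +${dollar_effect(beta_rm, base):,.0f}")
```

The same coefficient implies a larger dollar change for a more expensive property, so a single per-room dollar figure only holds at one price level.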
