CNN Food Classifier

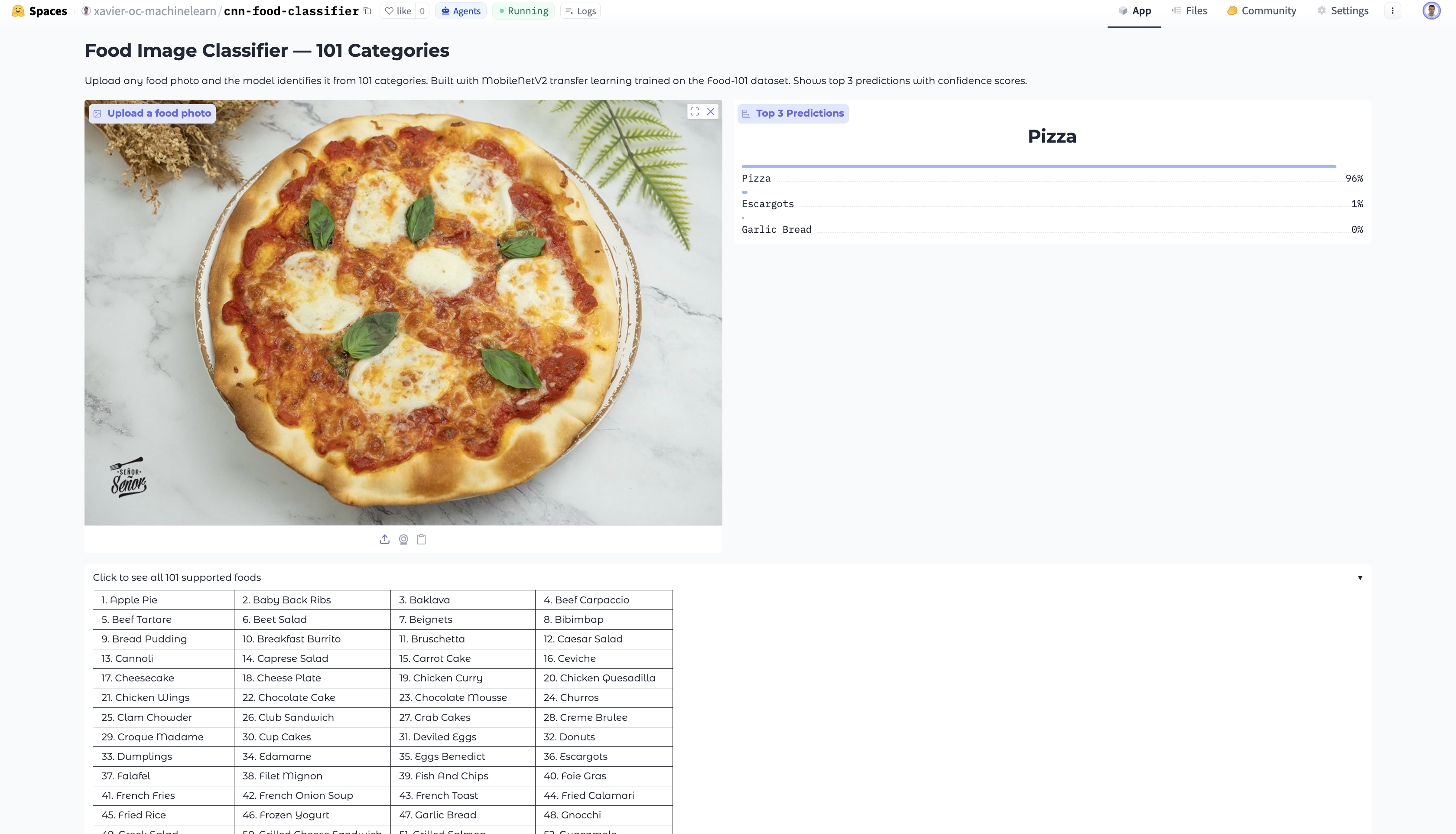

The model is hosted on Hugging Face Spaces, where anyone can upload a food photo and get instant predictions across 101 categories, from apple pie to waffles. It identifies the most likely dish and shows the top 3 predictions with confidence scores.

The architecture is built on MobileNetV2 pretrained on ImageNet, whose weights already encode edges, textures, and shapes learned from about 1.2M images. Rather than training from scratch, which would require far more data and compute, I reused those learned features and trained only the parts that needed to be food-specific.

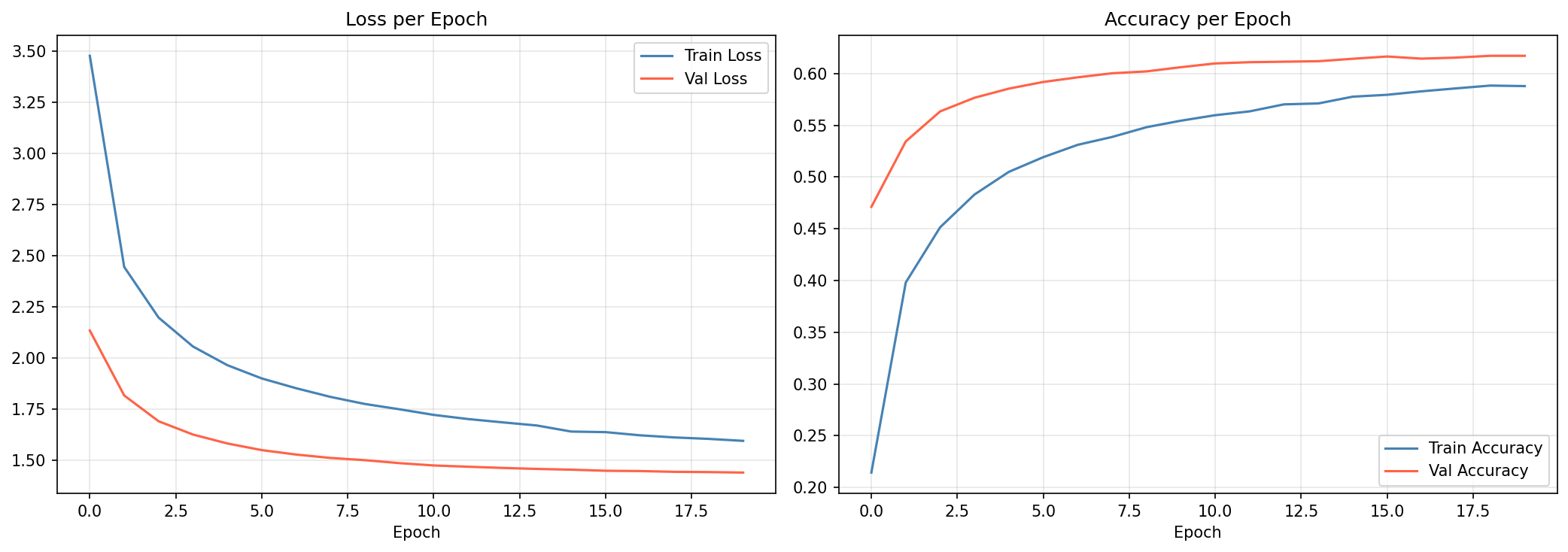

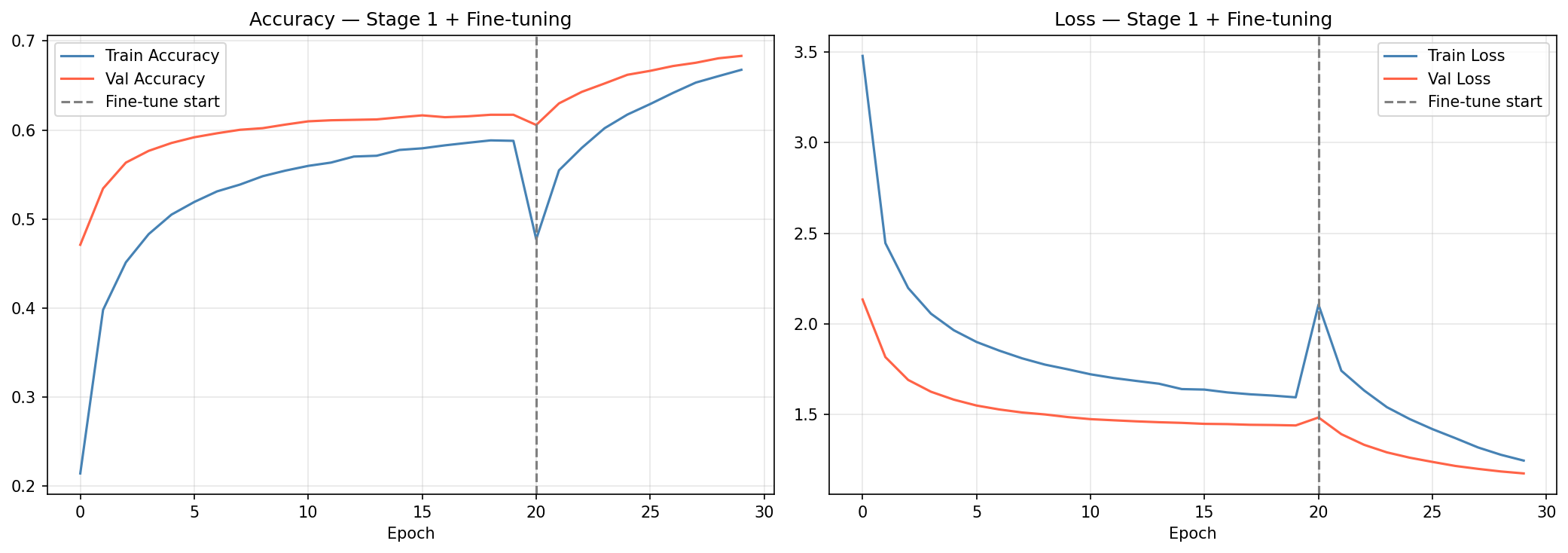

Stage 1 freezes the entire MobileNetV2 base and trains a lightweight classification head on top: GlobalAveragePooling2D → Dense(256, ReLU) → Dropout(0.3) → Dense(101, softmax). This converges quickly and reaches ~62% validation accuracy in 20 epochs at lr=1e-4.
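To make "lightweight" concrete, here is a rough count of the trainable parameters in the head described above. It is a sketch under one assumption that the text does not state: that the pooled MobileNetV2 output is a 1280-dimensional vector (the default width multiplier of 1.0). The pooling and dropout layers add no parameters.

```python
# Trainable parameters in the Stage 1 head.
# ASSUMPTION: the frozen base yields a 1280-dimensional feature vector after
# global average pooling (MobileNetV2 at width multiplier 1.0).

def dense_params(n_in: int, n_out: int) -> int:
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out

FEATURES = 1280  # assumed pooled feature size
HIDDEN = 256     # Dense(256, ReLU)
CLASSES = 101    # Dense(101, softmax)

head_params = dense_params(FEATURES, HIDDEN) + dense_params(HIDDEN, CLASSES)
print(head_params)  # 353893
```

Under that assumption, Stage 1 only has to fit about 354K parameters, which is one reason it converges quickly.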

Stage 2 unfreezes the top 30 layers of the base and fine-tunes them together with the classification head at lr=1e-5, a rate gentle enough to refine the pretrained weights toward food-specific features without overwriting them. The rest of the base stays frozen. This pushes validation accuracy to 68.3%.
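The partial unfreezing step can be sketched as a small helper that works on any sequence of objects with a `trainable` attribute, which is how Keras layers expose freezing. The helper name `unfreeze_top_layers` and the stand-in layer objects are hypothetical and only there so the sketch runs without TensorFlow; this is not the project's actual training code.

```python
from types import SimpleNamespace

def unfreeze_top_layers(layers, n_unfrozen):
    """Freeze every layer except the last `n_unfrozen`; return the trainable count."""
    cutoff = max(len(layers) - n_unfrozen, 0)
    for index, layer in enumerate(layers):
        # Layers at or beyond the cutoff are the "top" of the network.
        layer.trainable = index >= cutoff
    return sum(layer.trainable for layer in layers)

# Stand-in layers (hypothetical). The count of 100 is a placeholder,
# not the real depth of MobileNetV2.
fake_base = [SimpleNamespace(name=f"layer_{i}", trainable=False) for i in range(100)]
print(unfreeze_top_layers(fake_base, 30))  # 30
```

With a real Keras base model, the same helper could be called as `unfreeze_top_layers(base.layers, 30)` after setting `base.trainable = True`. The model then needs to be recompiled with the lower learning rate for the change to take effect.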

The training pipeline uses tf.data with mixed-precision (float16) training throughout, which gave ~30% faster throughput on an Apple M1 GPU via Metal. The app is deployed as a Gradio interface on Hugging Face Spaces: users upload a photo, the app resizes it to 224×224 and normalises the pixel values, and the model returns the top 3 predicted classes with confidence scores.
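The last step, turning the model's 101 softmax outputs into a top-3 list, can be sketched in plain Python. The function name `top_k` and the five-class toy probabilities are illustrative assumptions, not real model output.

```python
def top_k(probabilities, labels, k=3):
    """Return the k (label, probability) pairs with the highest probability."""
    ranked = sorted(zip(labels, probabilities), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]

# Hypothetical softmax output for a five-class toy example.
labels = ["apple_pie", "edamame", "pizza", "sushi", "waffles"]
probabilities = [0.05, 0.02, 0.70, 0.20, 0.03]

for label, p in top_k(probabilities, labels):
    print(f"{label}: {p:.1%}")
# pizza: 70.0%
# sushi: 20.0%
# apple_pie: 5.0%
```

Wrapping the result as `dict(top_k(probabilities, labels))` gives a label-to-confidence mapping, which is the kind of value a Gradio label output can display.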

Quick Facts

Overview

Problem

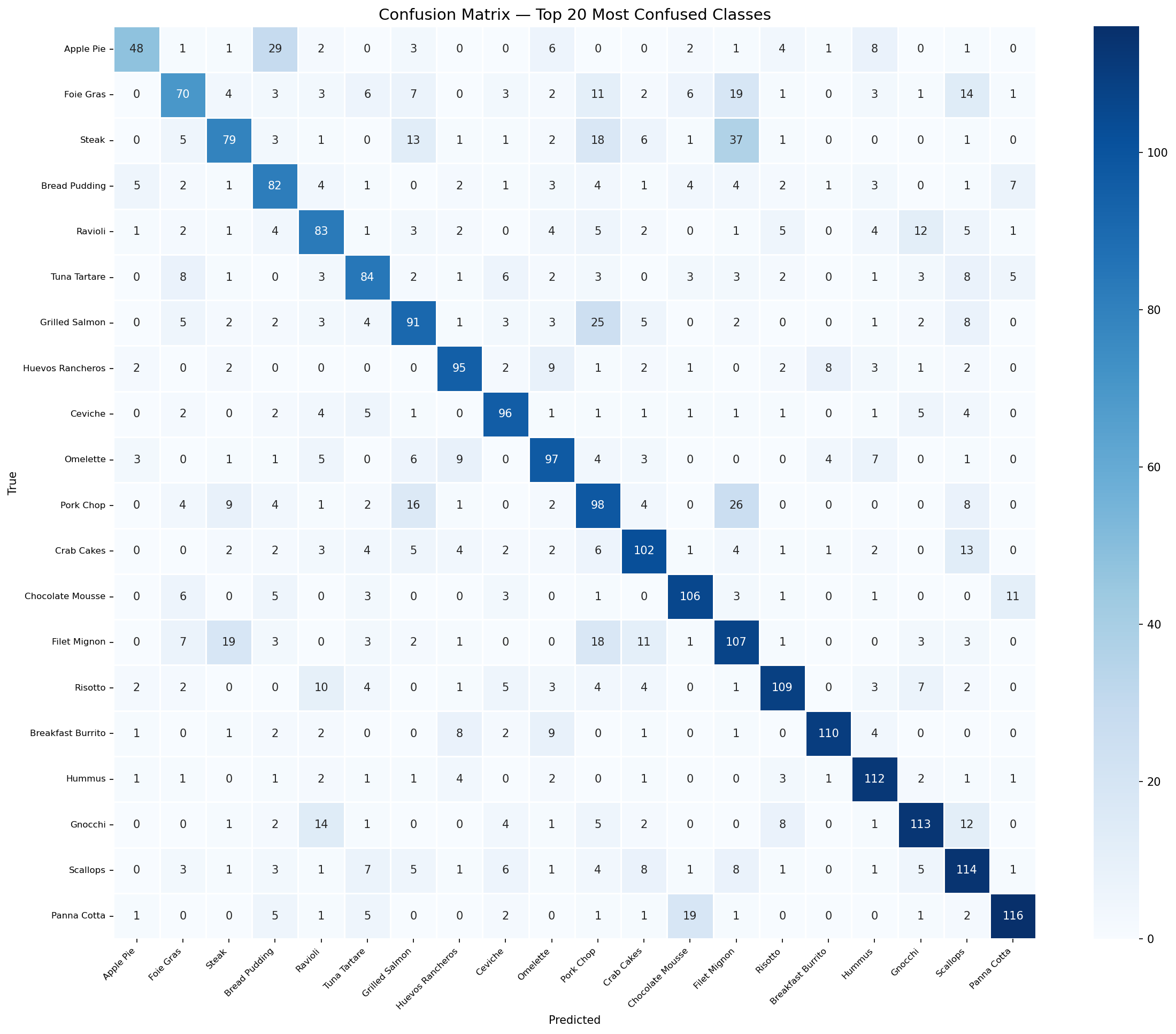

Training a 101-class food classifier from scratch would need far more data than Food-101 provides, along with far more compute and time. This is especially true for visually similar pairs like beef tartare vs tuna tartare or spaghetti bolognese vs carbonara, where even humans struggle.

Solution

Transfer learning from MobileNetV2 gave the model a head start: 1.2M ImageNet images' worth of visual knowledge, frozen and reused as a feature extractor. Fine-tuning only the top layers in Stage 2 let me specialise those features for food without losing what was already learned.

Challenges

- TensorFlow 2.16 is incompatible with Python 3.13 on Hugging Face Spaces; resolved by pinning python_version to 3.11 in the Space config.

- Hugging Face's new Xet storage requirement for binary files rejected the PNG plot files pushed via LFS; resolved by excluding the plots from the Space repo (they are not needed for inference).

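- Food-101 decoded to 224×224 float32 does not fit in memory: the 25,250 validation images alone come to about 14 GiB, and the training split is three times larger. .cache() had to be omitted from the tf.data pipeline to avoid crashing on a 16GB machine.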

- Gradio 6.0 moved the theme argument from the Interface constructor to launch(); updating the call removed the deprecation warning.
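The memory figure in the .cache() bullet above can be sanity-checked with simple arithmetic. The sketch below assumes 3-channel float32 tensors and the standard Food-101 split sizes (75,750 training and 25,250 validation images); the helper name `cache_size_gib` is hypothetical.

```python
BYTES_PER_FLOAT32 = 4

def cache_size_gib(num_images, height=224, width=224, channels=3):
    """Memory needed to hold decoded float32 images, in GiB."""
    total_bytes = num_images * height * width * channels * BYTES_PER_FLOAT32
    return total_bytes / 2**30

# ASSUMPTION: standard Food-101 split sizes.
print(f"validation: {cache_size_gib(25_250):.1f} GiB")  # validation: 14.2 GiB
print(f"training:   {cache_size_gib(75_750):.1f} GiB")  # training:   42.5 GiB
```

Under these assumptions the roughly 14 GB figure matches the validation split alone, and neither split leaves usable headroom on a 16GB machine once it is cached as float32.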

Results / Metrics

- 68.3% validation accuracy on Food-101 (101 classes, 25,250 val images)

- Best performing classes: Edamame 96%, Waffles 94%, Sushi 93%, Pizza 92%, Hot Dog 91%

- Hardest classes: Chocolate Mousse 43%, Beef Tartare 47%, Tuna Tartare 49%

- Live demo deployed on Hugging Face Spaces with top-3 predictions and confidence scores

- Full training notebook with inline outputs and visualisations committed to GitHub
